From the launch of ChatGPT to the iterations of presidential executive orders on artificial intelligence (AI), local governments can see firsthand the importance of this breakthrough technology now more than ever.

Andrew Ng once said, “AI is the new electricity,” which makes good sense, as AI has shown great potential to revolutionize many aspects of our work (e.g., productivity gains from automating call center tasks, smarter infrastructure management through predictive maintenance of roads and water systems, and real-time monitoring of social media for potential threats).

To help AI systems achieve their potential, we need good data. According to the European Union Agency for Fundamental Rights, algorithms used in AI systems can only be as good as the data used for their development. High-quality data can also mitigate bias and error and protect people’s fundamental rights when operating AI systems. Yet, the call for high-quality data is often hit-or-miss or lacks clarity and actionability. Before I delve into the suggestions for improving the data quality, I will share some context.

Data Governance and Data Literacy

Data governance provides organizational oversight of data management. When an organization has good data governance, its leaders have a good sense of data quality and a place to go for data-related issues and questions, such as data availability, data access, data security, regulatory compliance, and much more.

While data governance takes a top-down approach by setting organizational standards or approaches for managing data, data literacy takes a bottom-up approach by developing critical individual capacity to generate, collect, store, analyze, use, and share data—the various practices to manipulate data. Both are equally important for developing high-quality data.

But according to the State Chief Information Officer (CIO) 2023 Survey from the National Association of State Chief Information Officers (NASCIO), the majority, 69% of the state IT chiefs, reported they were only in the “beginning stages” of their data governance structure. The survey also revealed that 84% of states surveyed did not have a formal data literacy program.

As I reflect on these survey results, I continue to wonder how local governments compare to their state counterparts—worse off, perhaps? Quite likely. Unless similar research is done with local CIOs, this will only continue to be my best guess.

Data Quality and AI

Reading up to this point, you may have already gotten a sense that the organizational AI effort can go a long way when it has good data. But how does data quality affect the adoption of AI? Let me explain.

According to the AI Accountability Framework released by the Government Accountability Office,

Data used to train, test, and validate AI systems should be of sufficient quality and appropriate for the intended purpose to ensure the system produces consistent and accurate results.

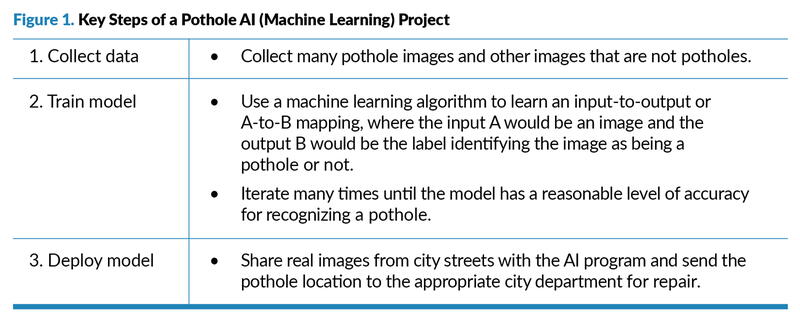

AI-based machine learning models begin with data and infer rules or decision procedures that predict outcomes. If your city were to develop an AI tool for pothole recognition, Figure 1 describes a simplified process for the project.

As shown in Figure 1, this pothole AI project uses two types of images (pothole vs. non-pothole) as source data and comes up with image recognition algorithms to help identify potholes in city streets. When the algorithms are accurate and the real images from city streets are of high quality, the repair crew can have reliable pothole location information and save much travel time by going directly to the places that have potholes and repairing them to make city streets safer.

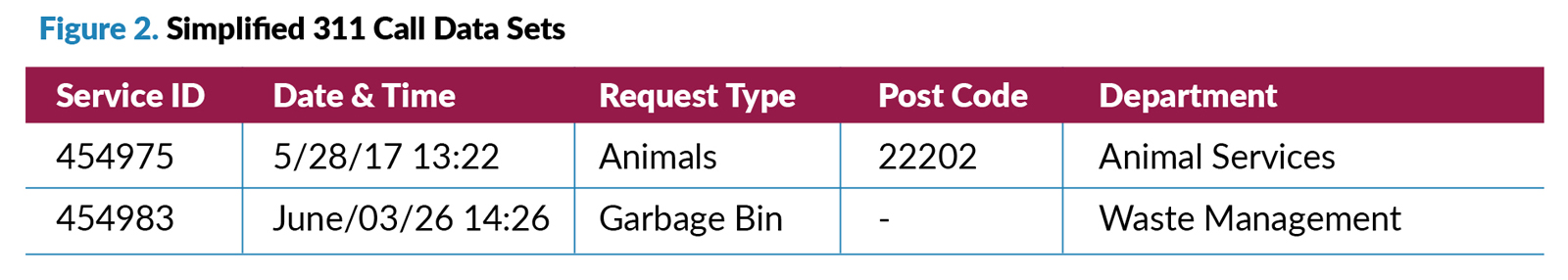

In fact, AI is applied at least as much to tables of data (i.e., structured data) as to images, audio, and text (i.e., unstructured data). Let’s say one of your AI project teams was asked to predict service call patterns and staffing levels for the upcoming week and was given the data shown in Figure 2. What issues might the team run into?

They are going to run into three issues:

• Consistency: The call date and time are presented inconsistently between the two data entries.

• Accuracy: The second entry, call 454983, has a recorded date of “June/03/26,” which is in the future, so it can’t be the actual date the call happened.

• Completeness: Call 454983 doesn’t record the post code.
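The three issues above can be checked automatically before any modeling begins. The following is a minimal sketch, not a definitive implementation: the field names, the first record, the list of accepted date layouts, and the "as of" date are all illustrative assumptions rather than values taken from Figure 2.

```python
from datetime import datetime

# Hypothetical records loosely modeled on the issues described above; the
# field names, the first record, and its values are illustrative assumptions.
calls = [
    {"call_id": "454982", "call_date": "2024-06-02 14:05", "post_code": "98101"},
    {"call_id": "454983", "call_date": "June/03/26", "post_code": ""},
]

# Assumed date layouts; the first one is treated as the standard format.
KNOWN_FORMATS = ["%Y-%m-%d %H:%M", "%B/%d/%y"]


def parse_date(text):
    """Return (datetime, format) for the first matching layout, else (None, None)."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt), fmt
        except ValueError:
            continue
    return None, None


def check_record(record, now):
    """Return a list of data quality issues found in one call record."""
    issues = []
    parsed, fmt = parse_date(record["call_date"])
    if parsed is None:
        issues.append("consistency: unrecognized date format")
    else:
        if fmt != KNOWN_FORMATS[0]:
            issues.append("consistency: date not in the standard format")
        if parsed > now:
            issues.append("accuracy: call date is in the future")
    if not record["post_code"].strip():
        issues.append("completeness: post code is missing")
    return issues


as_of = datetime(2024, 6, 10)  # assumed "today" for the check
for call in calls:
    print(call["call_id"], check_record(call, as_of))
```

Running checks like these at the point of data entry, rather than after the fact, is one way to reduce the cleaning time an AI team would otherwise spend.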

So, one of the first activities many AI teams would undertake is data cleaning and organization. According to CrowdFlower, data scientists spend about 60% of their time cleaning and organizing data. Imagine if a good portion of that time could be saved or repurposed.

What You Don’t Know About the DATA Act

Now, let’s circle back on ways to improve data quality. There has been an extraordinary piece of work done at the federal level, the Digital Accountability and Transparency Act of 2014 (i.e., the DATA Act), which has standardized and published accessible and machine-readable federal spending data through USASpending.gov. The goal of the act was to increase the accessibility, quality, and usefulness of online information regarding federal spending. The implementation of the act included three main bodies of work:

• The development of a consistent government-wide data standard.

• The development of the data collection and validation tool.

• The development of USASpending.gov to display the data and facilitate public use.
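To make the first two bodies of work more concrete, here is a minimal sketch of how a shared data standard and a validation step might fit together. It is an assumption-laden illustration: the `STANDARD` fields, the `validate` function, and the sample submissions are hypothetical and do not reflect the actual DATA Act schema or the Treasury tooling.

```python
from datetime import date

# Hypothetical citywide standard: each field maps to (required, expected type).
# Neither the field names nor the rules come from the DATA Act itself.
STANDARD = {
    "department": (True, str),
    "record_date": (True, date),
    "amount_usd": (True, float),
    "notes": (False, str),
}


def validate(submission):
    """Return a list of ways a submission departs from the standard."""
    errors = []
    for field, (required, expected_type) in STANDARD.items():
        value = submission.get(field)
        if value is None:
            if required:
                errors.append(f"missing required field: {field}")
        elif not isinstance(value, expected_type):
            errors.append(f"wrong type for {field}: expected {expected_type.__name__}")
    # Flag fields the standard does not define, so they can be mapped or dropped.
    for field in submission:
        if field not in STANDARD:
            errors.append(f"field not in standard: {field}")
    return errors


good = {"department": "Parks", "record_date": date(2024, 1, 15), "amount_usd": 1250.0}
bad = {"department": "Parks", "amount_usd": "1,250", "dept_code": 7}

print(validate(good))
print(validate(bad))
```

Keeping the standard as plain data, separate from the checking logic, means departments can review and revise the rules without touching the validation code.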

The U.S. Treasury Department used agile methodology in each of these critical efforts, featuring rapid development and deployment and then incrementally, iteratively, and flexibly enhancing the work over time. Led by Amy Edwards Holmes, the deputy assistant secretary of accounting policy and financial transparency at the time, this effort was documented and published by the National Academy of Public Administration.

What can be learned from this journey if we were to pursue a similar approach in local government? The good news is we don’t have to come up with a solution that needs to track more than $4 trillion (in 2014) in annual spending on a quarterly basis (the original scope of the DATA Act), but we do have to come up with tools that can address the data fundamentals citywide. And similar to the federal government, we will also need to address the uneven amounts of effort across departments.

Every department is unique in terms of its service offerings, staffing, data maturity, and even buy-in. This calls for an approach that meets departments where they are and helps them move forward.

Last and perhaps most critically, stakeholder engagement matters. Stakeholders, such as elected officials, academia, community partners, residents, and cross-agency staff, must play an important role in this process, not only by supporting the development and adoption of the required data standard but also by helping the work follow user-centered design principles through their unique, diverse, and critical contributions.

Citywide Data Strategy

We have already seen successes from this approach. In December 2023, the city of Seattle released its One Seattle Data Strategy: 2024–2026, “a three-year vision and action plan to advance our use of data, scale data excellence across the city, and achieve better and more equitable outcomes for residents.” The strategy identifies four key pillars: data quality and governance, data literacy and culture, data use and equity, and data and community engagement. Together they represent a holistic approach to data.

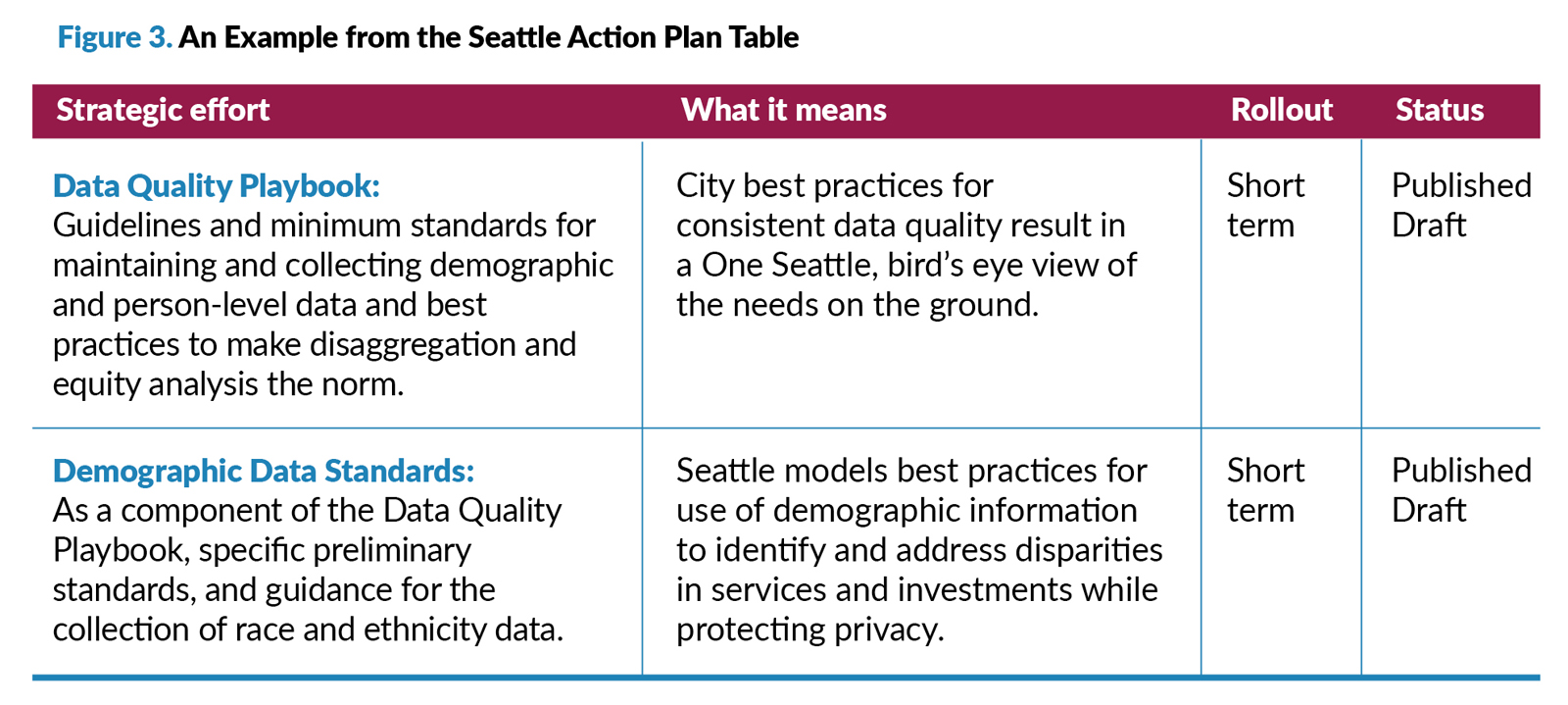

The strategy opens with a critical question that sets the tone for this work: Why does Seattle need a data strategy? The document responds with a two-part answer: a series of organizational and community goals that data can help achieve, and a series of opportunities that arise when data is paired with values (e.g., Data + Innovation, Data + Collaboration, and Data + Equity and Inclusion). To make this work actionable, the strategy identifies 19 projects, policies, and processes between 2024 and 2026, along with their timing (short, medium, and long term) and status (see Figure 3), and presents 20 examples of how teams have advanced the use of data and achieved better and more equitable outcomes for residents.

Conclusion

We’ve heard it time and time again: Rome wasn’t built in a day, and similar to that, the work of data and AI cannot be accomplished overnight. If your goal is to improve residents’ lives and build a sustainable community through transformative tools like AI, then starting from a strong foundation, with basics such as implementing citywide data strategies, could help you go a long way. The work in Seattle and in the federal government speaks to this.

Now, the real question is, how could you leverage this approach and build a culture of data and data-informed decision-making in your own organization and beyond?

KEL WANG is manager of applied data practices for Bloomberg Center for Government Excellence at Johns Hopkins University.
